The A/B Test Cohort Report and Dashboard provide detailed insights into test results and the performance of testing campaigns. The report tracks user assignments by the date they were added to a test and helps identify the most successful group. The dashboard complements the report by offering key metrics and a cumulative analysis view for a deeper understanding of test results. Together, these tools enable data-driven decision-making and the optimization of A/B testing campaigns. Key features include:

A/B Test Cohort Report Features:

- User Assignment Tracking – Monitors when users are assigned to a specific A/B test (assignment day).

- Group Performance Comparison – Evaluates multiple test groups to determine the most successful one.

- Parent and Nested Campaign Breakdown – Supports tracking at both the parent campaign level and the nested (child) campaign level. Read more about Parent and Nested Campaigns in the Understanding Parent and Nested Campaigns article.

- Conversion and Monetization Metrics – Provides data on in-app purchases, engagement, and retention for each test group.

- Granular Data Segmentation – Enables filtering by cohort day, test groups, and campaign structures.

A/B Test Cohort Dashboard Features:

- Aggregated Test Insights – Displays key A/B test metrics in a centralized dashboard.

- Cumulative Data View – Allows analysis of metrics over time to observe trends and long-term effects.

- Customizable Metrics Display – Users can select key performance indicators relevant to their test evaluation.

- Interactive Data Exploration – Provides drill-down capabilities for in-depth test result analysis.

- Performance Optimization Support – Helps refine A/B testing strategies based on real-time insights.

The A/B Test Cohort Report is designed to analyze the performance of A/B testing campaigns. The report aggregates data starting from the assignment day, when the user was allocated to a test group. This allows for tracking experiment dynamics and identifying which group performs better.

The report tracks metrics for two levels of campaigns:

- Parent Campaign – The main campaign that may contain one or more nested campaigns or exist without them.

- Nested Campaign – A child campaign within a parent campaign. All transactions and key performance indicators (KPIs) are attributed specifically to the nested campaign.

This structure allows a detailed analysis of each test group’s performance, enabling data-driven decision-making.

Read more about Parent and Nested Campaigns in the Understanding Parent and Nested Campaigns article.

Let's assume Parent Campaign A had 10 impressions and contained two Nested Campaigns, B and C, each offering different in-app purchase prices. In this case, the report would display the data as follows:

| Parent Campaign | Impressions |
|---|---|
| Parent A | 10 |

| Parent Campaign | Nested Campaign | Impressions |
|---|---|---|
| Parent A | Nested B | 10 |
| Parent A | Nested C | 10 |

Below is a detailed list of the primary filters and dimensions available in the report, each accompanied by a description to clarify its purpose and usage:

| Filter and Dimension | Description |
|---|---|
| App | The name of the application. |
| A/B Test Name | The name of the A/B test for campaigns launched within the scope of A/B testing. |
| A/B Test Group | The name of the group for campaigns launched within the scope of A/B testing. |
| Day of Assign to A/B Test | The date when the user was assigned to the selected A/B test. If a user installed the app earlier than they were assigned to the test, all data between the installation date and assignment date is excluded from the report. |
| Week of Assign to A/B Test | The week when the user was assigned to the selected A/B test. If the app was installed earlier than the assignment, all data between installation and assignment is excluded. |
| Day Index | The index of the campaign viewing day. For example, on the installation day, the Day Index = 0. |
| Monetization Type | The type of monetization the campaign belongs to. Possible types: Inapp Monetization (in-app purchases); Ad Monetization (interstitials, rewarded video, and banners); Subscription Monetization (subscriptions); Non-Monetization (actions unrelated to monetization, such as rate and review, and notifications); Cross-promotion Monetization (cross-promo). |
| Country | The user's country. |
| App Version | The version of the application. |
| Campaign Type | The type of campaign, such as Banner, Interstitial, Rewarded Video, External, Subscription, LTO (Limited-Time Offer), Cross-promo, Rate and Review, among others. |
| Parent Campaign | The name of the parent campaign, which may include one or more nested campaigns. |
| Nested Campaign | The name of the nested campaign within the parent campaign. Transactions are attributed specifically to nested campaigns. |
| Event Name | The name of the event associated with the impression, click, or purchase. |
| Event Number | The sequential counter of the event, which remains consistent throughout the app's lifecycle. |
| Product ID | The ID of the product. |
| Product ID Type | The type of product. |
| Creative | The creative asset used in the campaign. |
| Subscription Status | The user’s status at the time of view/click/purchase, indicating whether they have an active subscription. |
| In-App Status | The user’s status at the time of view/click/purchase, indicating if they are free, paying, or unknown. When making the first purchase, the user’s status changes from free to paying. |
| Parameters | Custom parameters sent along with the event. |
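
The exclusion rule described for Day of Assign to A/B Test and Week of Assign to A/B Test can be illustrated with a short Python sketch. The event layout, the field names (`user_id`, `event_date`, `name`), and the helper `filter_post_assignment` are hypothetical and are not part of the product. The sketch also assumes that events on the assignment day itself are kept.

```python
from datetime import date


def filter_post_assignment(events, assignment_dates):
    """Keep only events dated on or after each user's assignment date.

    Hypothetical helper: `events` is a list of dicts and `assignment_dates`
    maps user_id -> assignment date. Neither is a real product API.
    """
    kept = []
    for event in events:
        assigned_on = assignment_dates.get(event["user_id"])
        if assigned_on is None:
            continue  # user was never assigned to the test
        # Assumption: the assignment day itself counts toward the report.
        if event["event_date"] >= assigned_on:
            kept.append(event)
    return kept


assignment_dates = {"u1": date(2024, 3, 10)}
events = [
    # Happened after install but before assignment, so it is dropped.
    {"user_id": "u1", "event_date": date(2024, 3, 5), "name": "impression"},
    {"user_id": "u1", "event_date": date(2024, 3, 10), "name": "impression"},
    {"user_id": "u1", "event_date": date(2024, 3, 12), "name": "purchase"},
]
report_events = filter_post_assignment(events, assignment_dates)
```

In this example, only the two events dated on or after the assignment date remain in `report_events`.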

Below is a detailed list of the primary metrics available in the report, each accompanied by a description to clarify its purpose and usage:

| Metric | Description |
|---|---|
| Impressions | The number of campaign impressions for the selected cohort. |
| Clicks | The number of campaign clicks for the selected cohort. |
| Trial Started | The number of trial activations within the selected date range. |
| Trial Converted | The number of conversions to paid subscriptions after the trial ends for the selected user group. Refers to the subscription creation date based on RevenueCat data. |
| First Payment | The number of paid subscription activations within the selected date range. |
| Renews | The total number of subscription renewals, including initial renewals, for the selected cohort. Renewals are counted starting from the date the subscription campaign was shown. Data includes renewals within 180 days from the campaign display date. |
| Purchases | The total number of in-app purchases for the selected cohort. |
| Revenue | Depending on parameters, this may include revenue from banner, interstitial, rewarded ads, subscriptions, and in-app campaigns. Subscription revenue is calculated starting from the campaign display date, covering additional subscription-related events for up to 180 days. |
| Ads Revenue | The total ad revenue for the selected cohort. |
| In-apps Revenue | The total in-app revenue for the selected cohort. |
| Subs Revenue | The total subscription revenue for the selected cohort. |
| CTR, % | Click-through rate calculated as Clicks / Impressions. |
| pCVR, % | The percentage of impressions that converted to first payments. |
| tCVR, % | The percentage of impressions that converted to free trials. |
| CVR, % | Conversion rate calculated as Purchases / Clicks. |
| I2I, % | Impression-to-install conversion rate calculated as Purchases / Impressions. |
| eCPM | Effective cost per thousand impressions calculated as (1000 * Revenue) / Impressions. |
| Rewarded Impressions | The number of nested_rewarded campaign impressions for the selected cohort. |
| Rewarded eCPM | Revenue earned per 1,000 impressions for nested_rewarded campaigns. |
| Active Users | The number of unique client IDs participating in the selected campaign. |
| Rev/Active User | Revenue generated per active user. |
| IMPAU | Impressions per active user, calculated as Impressions / Active Users. |
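
The ratio metrics in the table (CTR, CVR, eCPM, Rev/Active User, and IMPAU) follow directly from the base counts. The Python sketch below shows that arithmetic under a few assumptions: the function names `ratio` and `derived_metrics` are hypothetical, metrics marked "%" are scaled by 100 for display, and a zero denominator yields `None` rather than an error. It is an illustration only and does not show how the report computes these values internally.

```python
def ratio(numerator, denominator, scale=1):
    """Return scale * numerator / denominator, or None for a zero denominator."""
    if not denominator:
        return None
    return scale * numerator / denominator


def derived_metrics(impressions, clicks, purchases, revenue, active_users):
    """Compute the ratio metrics from base counts (hypothetical helper)."""
    return {
        # Assumption: "%" metrics are the documented ratio multiplied by 100.
        "CTR, %": ratio(clicks, impressions, scale=100),
        "CVR, %": ratio(purchases, clicks, scale=100),
        # eCPM = (1000 * Revenue) / Impressions, as stated in the table.
        "eCPM": ratio(revenue, impressions, scale=1000),
        "Rev/Active User": ratio(revenue, active_users),
        "IMPAU": ratio(impressions, active_users),
    }


metrics = derived_metrics(
    impressions=2000, clicks=100, purchases=5, revenue=40.0, active_users=500
)
```

With these sample inputs, a group with 2,000 impressions and 40.0 in revenue has an eCPM of 20.0 and an IMPAU of 4.0.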

To analyze a test, use the filters to select the time period that matches the test duration, the application, and the name of the test. Once these filters are set, you can compare the metrics across the test groups using the dimensions you choose, which gives a detailed view of each group's performance.

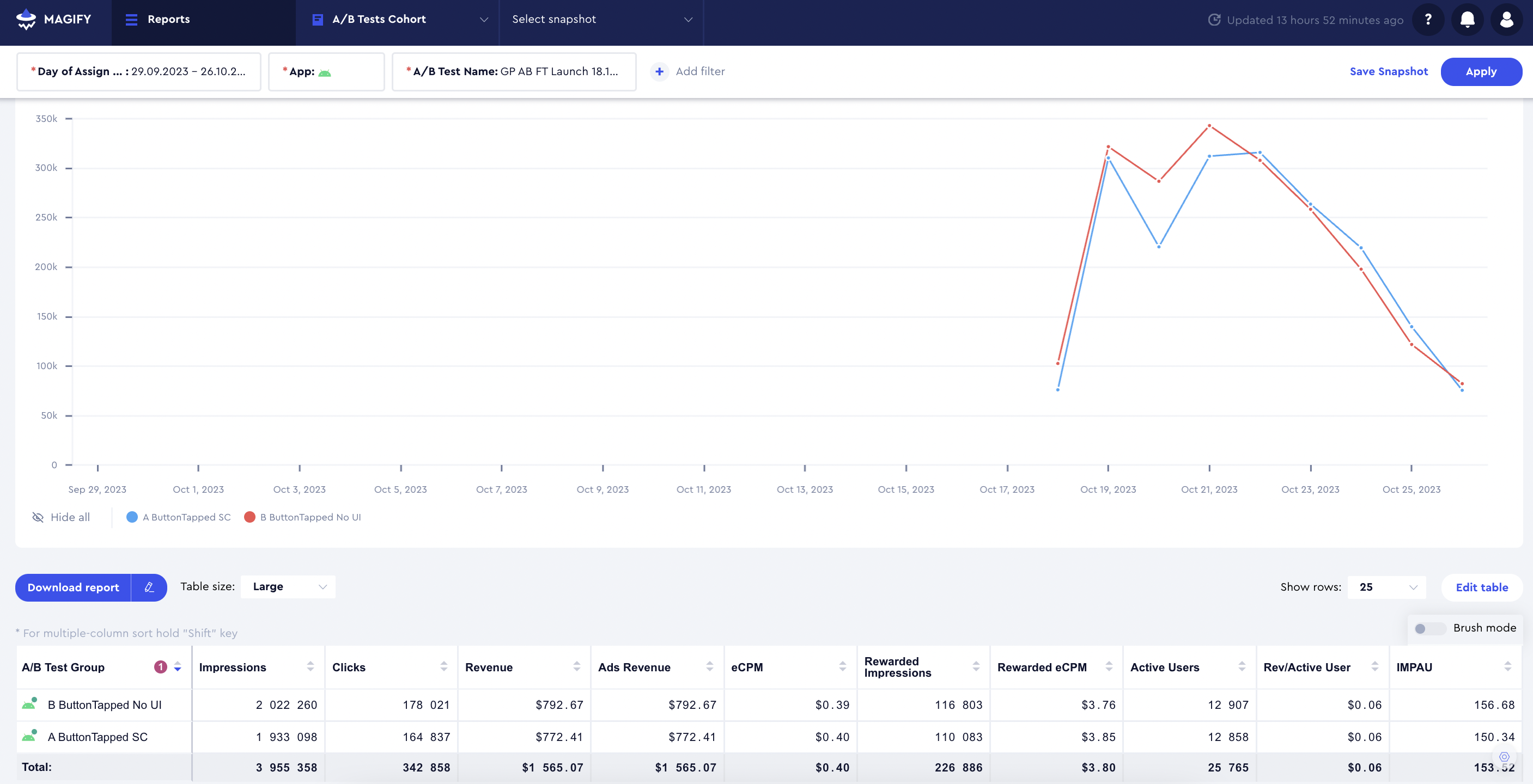
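
As a rough illustration of comparing groups on a chosen metric, the Python sketch below ranks exported test-group rows by Rev/Active User. The row layout, the group names, and the helper `rank_groups` are hypothetical. The sketch only sorts the values and does not assess whether the difference between groups is statistically meaningful.

```python
def rank_groups(rows, metric):
    """Sort test-group rows by the chosen metric, highest first."""
    return sorted(rows, key=lambda row: row[metric], reverse=True)


# Hypothetical per-group totals for one test over the selected period.
rows = [
    {"group": "control", "revenue": 120.0, "active_users": 400},
    {"group": "variant_a", "revenue": 150.0, "active_users": 410},
]

# Rev/Active User: revenue generated per active user.
for row in rows:
    row["rev_per_active_user"] = row["revenue"] / row["active_users"]

ranked = rank_groups(rows, "rev_per_active_user")
best_group = ranked[0]["group"]
```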

The A/B Test Cohort Dashboard presents the key metrics from the A/B Test Cohort Report for analyzing A/B tests. Some of these metrics can also be viewed in cumulative form, which shows how test performance develops over time.

Below is a detailed list of the primary filters and dimensions available in the dashboard, each accompanied by a description to clarify its purpose and usage:

| Filters and Dimensions | Description |
|---|---|
| App | The name of the application. |
| A/B Test | The name of the A/B test. |
| Country | The user's country. |

Below is a detailed list of the primary metrics available in the dashboard, each accompanied by a description to clarify its purpose and usage:

| Metric | Description |
|---|---|
| ARPU Cumulative (Assigned) | Revenue from in-app purchases, subscriptions, and ads generated by all assigned users divided by the cohort size. Cohort size refers to users assigned to the test for more than M days since assignment to day X. |
| Median Session | The median session duration. |
| Average Session | The average session duration. |
| ARPU Cumulative (Participated) | Revenue from in-app purchases, subscriptions, and ads generated by participating users divided by the cohort size of A/B tests. Cohort size refers to users participating in the test for more than M days since assignment to day X. |
| AD ARPU Cumulative (Participated) | Ad revenue generated by all participating users divided by the cohort size of A/B tests. Cohort size refers to users for whom more than M days have passed since assignment to day X. |
| iARPPU Cumulative | Revenue from in-app purchases and subscriptions divided by the number of users generating this revenue. Revenue is based on data from the Magify validator; data validation from RevenueCat is also supported. Applies to users for whom more than M days have passed since assignment to day X. |
| % of Paying Users Cumulative | The percentage of users who purchased any product (in-app or subscription) after M days from assignment up to day X. |
| Renews Cumulative | The cumulative number of subscription renewals for the selected test up to day X (trial converted and first payments excluded). |
| Paid Subscription Activation Cumulative | The cumulative number of non-trial subscriptions activated for the selected test up to day X. |
| Trial Converted Cumulative | The cumulative number of trials converted to paid subscriptions for the selected test up to day X. |
| Trial Started Cumulative | The cumulative number of trials started for the selected test up to day X. |
| In-apps Cumulative | The cumulative number of in-app purchases for the selected test up to day X. |
| In-apps Avg Check Cumulative | In-apps Revenue Cumulative divided by In-apps Cumulative. |
| Subs Revenue Cumulative | The cumulative total of subscription revenue for the selected test up to day X. |
| In-apps Revenue Cumulative | The cumulative total of in-app revenue for the selected test up to day X. |
| Revenue Cumulative | The cumulative total of in-app, subscription, and ad revenue for the selected test up to day X. |
| Impressions Cumulative | The cumulative number of banner, interstitial, and rewarded impressions up to day X. |
| Impressions per DAU | The number of banner, interstitial, and rewarded impressions on day X divided by the DAU on day X for users assigned to the test. |
| Retention Cumulative | The number of users active on day M (as specified in the metric) from the time of assignment up to day X. |
| Sessions per DAU | The number of sessions on day X divided by the DAU on day X for users assigned to the test. |
| DAU | The number of users assigned to the test who were active on day X. |
| Audience Participated Cumulative | The cumulative number of users who participated in this test, accumulated up to day X. |
| Audience Participated | The number of users who participated in this test, meaning they received the test configuration and saw the A/B test campaigns. |
| Audience Assigned | The number of users assigned to this test, meaning they received the test configuration but did not necessarily see the A/B test campaigns. |
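
The cumulative ARPU definitions above can be read in more than one way, so the Python sketch below shows only one possible interpretation. It assumes that the cohort for day index M contains the users for whom more than M days have passed between assignment and day X, and that revenue is summed from the assignment day (index 0) through day index M. The function `arpu_cumulative`, its parameters, and the sample data are all hypothetical, and the dashboard's own calculation may differ.

```python
from datetime import date


def arpu_cumulative(assignments, revenue_events, m, day_x):
    """Cumulative ARPU through day index m, under the stated assumptions.

    assignments:    dict of user_id -> assignment date
    revenue_events: list of (user_id, event_date, amount) tuples
    m:              day index counted from the assignment day
    day_x:          the date up to which data is available
    """
    # Assumed cohort rule: more than m days have passed since assignment.
    cohort = {
        user
        for user, assigned_on in assignments.items()
        if (day_x - assigned_on).days > m
    }
    if not cohort:
        return None

    total = 0.0
    for user, event_date, amount in revenue_events:
        if user not in cohort:
            continue
        day_index = (event_date - assignments[user]).days
        # Count revenue only inside the window [0, m] after assignment.
        if 0 <= day_index <= m:
            total += amount
    return total / len(cohort)


assignments = {
    "u1": date(2024, 3, 1),
    "u2": date(2024, 3, 2),
    "u3": date(2024, 3, 9),  # assigned too recently for m = 3
}
revenue_events = [
    ("u1", date(2024, 3, 2), 2.0),  # day index 1, counted
    ("u1", date(2024, 3, 8), 5.0),  # day index 7, outside the window
    ("u2", date(2024, 3, 4), 1.0),  # day index 2, counted
    ("u3", date(2024, 3, 9), 4.0),  # user is not in the cohort
]
arpu_day_3 = arpu_cumulative(assignments, revenue_events, m=3, day_x=date(2024, 3, 10))
```

Here two users qualify for the cohort and contribute 3.0 in revenue inside the window, so `arpu_day_3` is 1.5.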